There are a number of ways to check for linear dependency. If the vectors are linearly dependent, the intersection of the two spans is an answer.
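One possible way to get the intersection of two spans is sketched below. This is only an illustration and not necessarily the approach the answer had in mind: the matrices `U` and `W` are hypothetical example data, each is assumed to have linearly independent columns, and `null` is used with its default tolerance.

```matlab
% Hypothetical bases: the columns of U and W each span a subspace of R^3.
% Both are assumed to have linearly independent columns.
U = [1 0; 0 1; 0 0];   % span{e1, e2}
W = [0 0; 1 0; 0 1];   % span{e2, e3}

% A vector lies in both spans when U*x = W*y, i.e. [U, -W]*[x; y] = 0.
N = null([U, -W]);

if isempty(N)
    common = zeros(size(U, 1), 0);   % the spans meet only at the zero vector
else
    % Map the x-part of each null vector back through U, then orthonormalize.
    common = orth(U * N(1:size(U, 2), :));
end
disp(common)
```

With numerically computed eigenvectors, the default tolerance of `null` may be too strict, so the rank decision would probably need a tolerance suited to the data.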

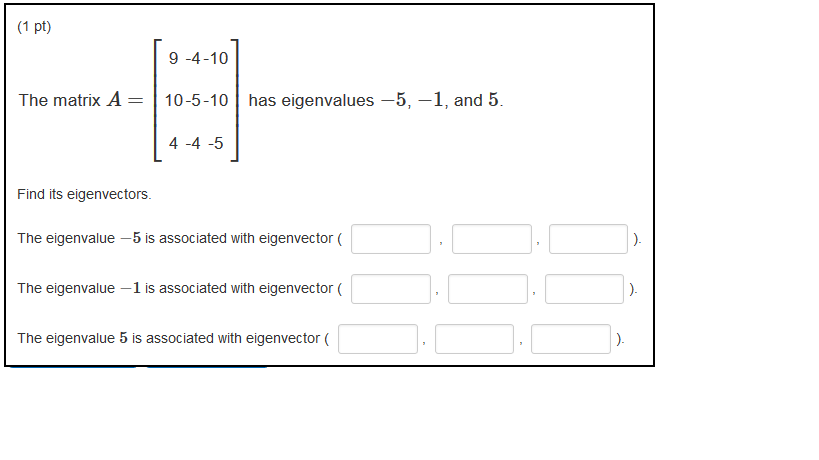

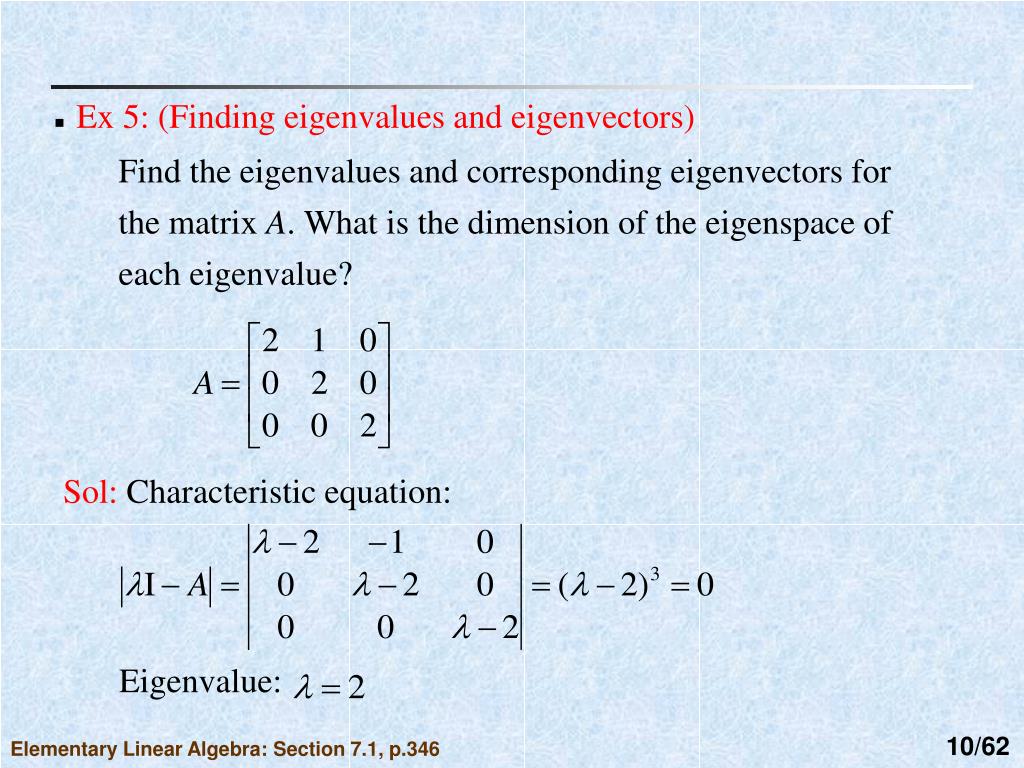

The 2 Fisher matrices are available on these links:

If I know a common basis of eigenvectors P, I could deduce D1a and D2a from D1 and D2, couldn't I?

I don't think there is a built-in facility in Matlab for computing common eigenvectors of two matrices. I'll just outline a brute-force way and do it in Matlab in order to highlight some of its eigenvector-related methods. We will assume the matrices A and B are square and diagonalizable.

1. Get the eigenvectors/values for A and B respectively.
2. Group the resultant eigenvectors by their eigenspaces.
3. Check for intersection of the eigenspaces by checking linear dependency among the eigenvectors of A and B, one pair of eigenspaces at a time.

Matlab does provide methods for (efficiently) completing each step! Except, of course, that step 3 involves checking linear dependency many, many times, which in turn means we are likely doing unnecessary computation. Not to mention, finding common eigenvectors may not require finding all eigenvectors. So this is not meant to be a general numerical recipe.

In other words, if you check tol=sum(abs(A*V(:,i)-D(i)*V(:,i))), where D(i), V(:,i) are the corresponding eigenpairs, the result should be close to zero.

This makes sense because the 1st eigenspace is the one with eigenvalue 3, spanned by columns 1 and 2 of W, and similarly for the 2nd eigenspace.

How to get the linear intersect of (the span of) two sets

To complete the task of finding common eigenvectors, you do the above for both A and B. Next, for each pair of eigenspaces, you check for linear dependency.
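The first two steps of the brute-force outline (getting the eigenpairs, then grouping the eigenvectors by eigenspace) might look roughly like this. It is a minimal sketch under stated assumptions: the matrix `A` is a hypothetical example with real eigenvalues, and `groupTol`, `groupId`, `eigenspaces` and `eigenvalues` are names made up for the illustration.

```matlab
% Hypothetical symmetric test matrix with a repeated eigenvalue (3, 3, 1).
A = [3 0 0; 0 3 0; 0 0 1];

[V, D] = eig(A);        % columns of V: eigenvectors, diag(D): eigenvalues
lambda = diag(D);

% Residual of each eigenpair; it should be close to zero.
residual = zeros(size(lambda));
for i = 1:numel(lambda)
    residual(i) = sum(abs(A*V(:, i) - lambda(i)*V(:, i)));
end

% Sort the (assumed real) eigenvalues, then start a new group wherever
% two neighbours differ by more than an assumed tolerance.
groupTol = 1e-8;                          % assumption, problem dependent
[lambdaSorted, order] = sort(lambda);
V = V(:, order);
groupId = cumsum([1; diff(lambdaSorted) > groupTol]);

% eigenspaces{k} holds a basis of the k-th eigenspace as columns.
eigenspaces = arrayfun(@(k) V(:, groupId == k), 1:max(groupId), ...
                       'UniformOutput', false);
eigenvalues = arrayfun(@(k) lambdaSorted(find(groupId == k, 1)), 1:max(groupId));
disp(eigenvalues)
```

The same grouping would be repeated for B, after which each eigenspace of A can be compared with each eigenspace of B. Complex eigenvalues would need a different grouping rule, since `sort` orders complex numbers by magnitude.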
Particularly, I am interested in the eig(A,B) Matlab function. I tried to use it like this:

% Search for common build eigen vectors between FISH_sp and FISH_xc

% Diagonalize the matrix (A B^-1) to compute Lambda since we have AX=Lambda B X

% Compute the final endomorphism : F = P D P^-1

FISH_final = V*eye(7).*eigen_final*inv(V)

But the matrix FISH_final doesn't give good results: I do other computations from this matrix (it is actually a Fisher matrix), and the results of these computations are not valid. So surely I must have made an error in my code snippet above.

At first, I would prefer to get this working in Matlab as a prototype and then, if it works, look into doing this synthesis with MKL or with Python functions. I am a little lost among all the potential methods that exist to carry this out. How can I build these common eigenvectors and also find the associated eigenvalues?

The screen capture below shows that the kernel of the commutator has to be different from the null vector:

EDIT 1: On Math Stack Exchange, I was advised to apply the Singular Value Decomposition (SVD) to the commutator in Matlab:

"The SVD approach gives you a unit vector v that minimizes ‖(AB−BA)v‖ (with the constraint that ‖v‖=1)"

So I extract the approximate eigenvectors V from:

[U,S,V] = svd(A*B-B*A)

Is there a way to increase the accuracy, to minimize ‖(AB−BA)v‖ as much as possible?

IMPORTANT REMARK: Maybe some of you didn't fully understand my goal. Concerning the common basis of eigenvectors, I am looking for a combination (vectorial or matricial) of V1 and V2, or directly using the null operator on the 2 input Fisher matrices, to build this new basis "P" in which, with eigenvalues other than the known D1 and D2 (noted D1a and D2a), we could have:

F = P (D1a+D2a) P^-1

To compute the new Fisher matrix F, I need to know P, assuming that D1a and D2a are equal respectively to the D1 and D2 diagonal matrices (coming from the diagonalization of the A and B matrices).
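To make the SVD-on-the-commutator idea from EDIT 1 concrete, here is a small sketch. The matrices `A` and `B` are hypothetical stand-ins (not the Fisher matrices from the question), chosen so that they share exactly one eigenvector. Since lying in the kernel of the commutator is necessary but not sufficient for being a common eigenvector, the sketch also checks the candidate directly.

```matlab
% Hypothetical upper-triangular pair that shares the eigenvector e1 = [1; 0; 0].
A = [1 2 0; 0 3 1; 0 0 4];
B = [2 1 1; 0 5 0; 0 0 7];

C = A*B - B*A;              % commutator
[~, S, V] = svd(C);         % singular values come back in decreasing order
s = diag(S);

% The last right singular vector minimizes norm(C*v) over unit vectors v.
v = V(:, end);
fprintf('smallest singular value: %.2e\n', s(end));

% Lying in the kernel of C is necessary but not sufficient, so check
% directly that v is an eigenvector of both matrices (Rayleigh quotients).
a = v' * A * v;
b = v' * B * v;
fprintf('norm(A*v - a*v) = %.2e\n', norm(A*v - a*v));
fprintf('norm(B*v - b*v) = %.2e\n', norm(B*v - b*v));
```

If the smallest singular value is not close to zero, the two matrices have no exact common eigenvector, and the vector returned is only the best approximation in the sense of the quoted statement.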
I am looking to find, or rather build, a common eigenvector matrix X between 2 matrices A and B such that:

AX = Xa, with "a" the diagonal matrix corresponding to the eigenvalues

BX = Xb, with "b" the diagonal matrix corresponding to the eigenvalues

where A and B are square and diagonalizable matrices.

I took a look at a similar post but did not manage to reach a conclusion, i.e. to get valid results when I build the final wanted endomorphism F defined by:

F = P D P^-1

I have also read the Wikipedia topic and this interesting paper, but could not extract methods that are easy to implement.
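As a sanity check for any candidate X, one could test whether `X\(A*X)` and `X\(B*X)` come out diagonal, and then rebuild F with a solve instead of `inv`. The sketch below uses a hypothetical pair constructed from a known shared basis, so every matrix in it is an assumption for illustration. Taking D as the sum of the two recovered diagonal matrices is also just one choice.

```matlab
% Hypothetical pair sharing the eigenvector basis X (so A and B commute).
X = [1 1 0; 0 1 1; 0 0 1];
a = diag([1 2 3]);
b = diag([4 5 6]);
A = X * a / X;
B = X * b / X;

% For a true common eigenvector matrix, X\(A*X) and X\(B*X) are diagonal.
aRec = X \ (A * X);
bRec = X \ (B * X);
offDiag = @(M) norm(M - diag(diag(M)), 'fro');
fprintf('off-diagonal part of a: %.2e\n', offDiag(aRec));
fprintf('off-diagonal part of b: %.2e\n', offDiag(bRec));

% F = X*D/X with D = aRec + bRec; with an exactly shared basis this
% reproduces A + B.
F = X * (aRec + bRec) / X;
fprintf('norm(F - (A + B)) = %.2e\n', norm(F - (A + B)));
```

A clearly nonzero off-diagonal part would indicate that X is not a common eigenvector matrix for the pair.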
